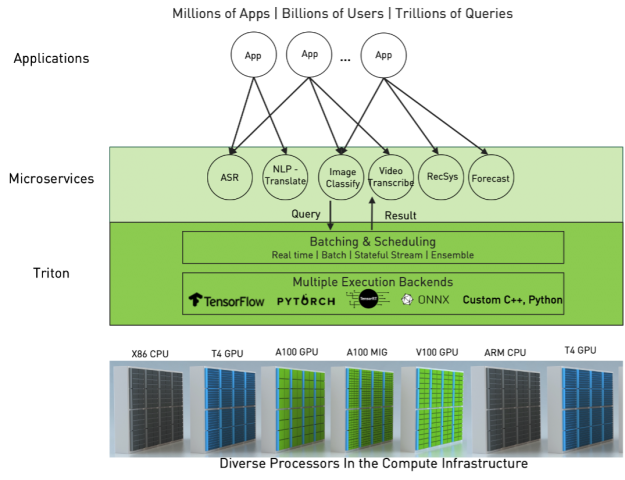

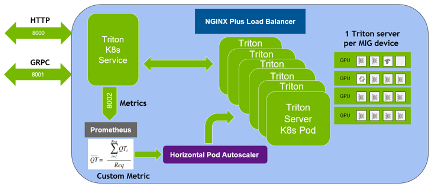

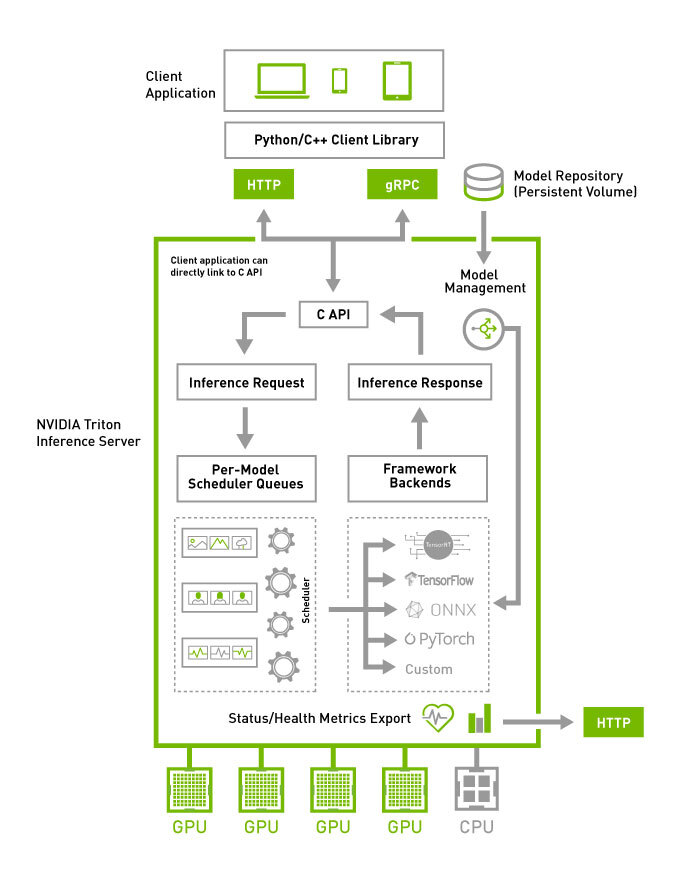

Achieve hyperscale performance for model serving using NVIDIA Triton Inference Server on Amazon SageMaker | AWS Machine Learning Blog

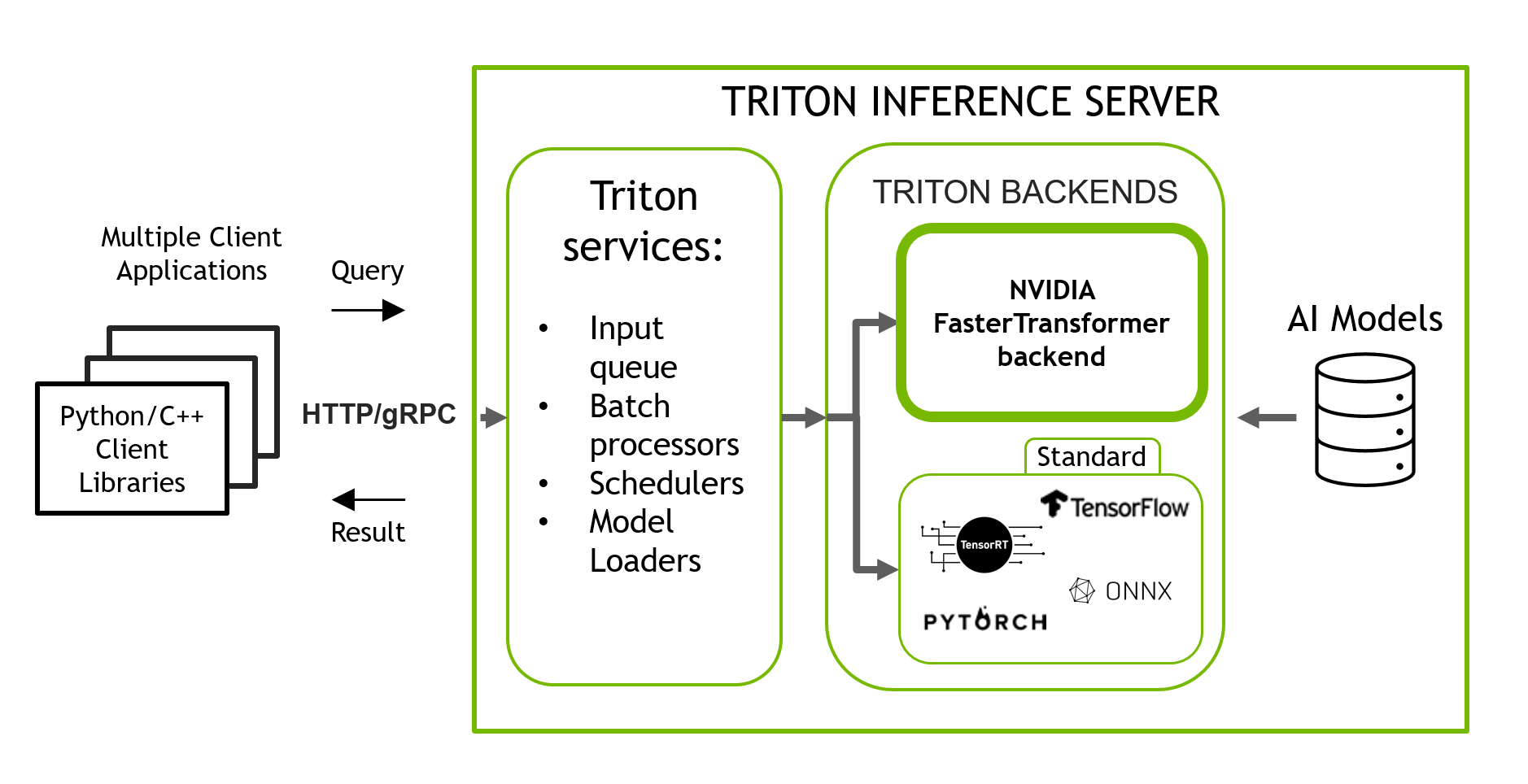

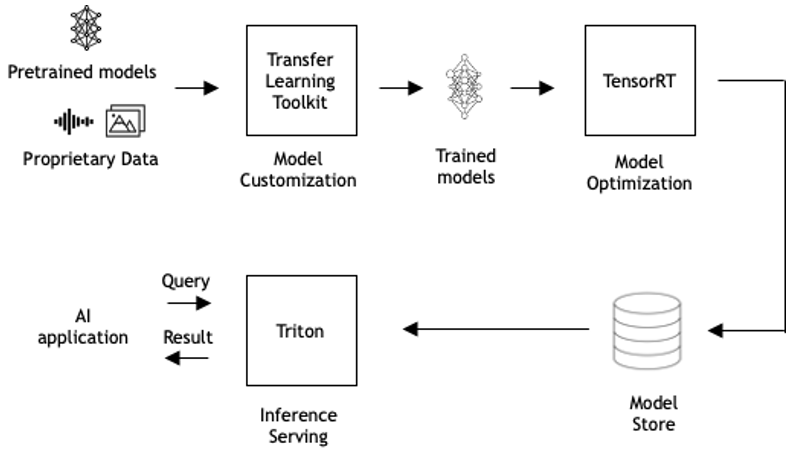

Serving and Managing ML models with Mlflow and Nvidia Triton Inference Server | by Ashwin Mudhol | Medium

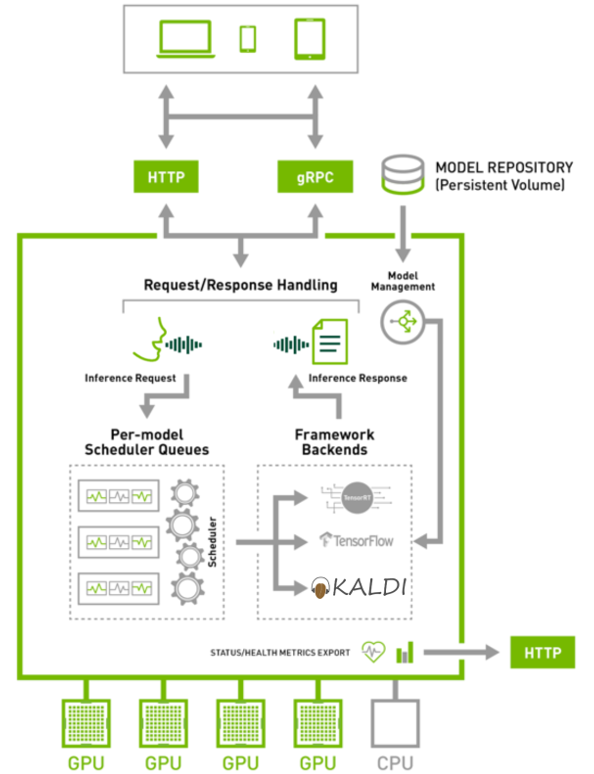

Deploy fast and scalable AI with NVIDIA Triton Inference Server in Amazon SageMaker | AWS Machine Learning Blog

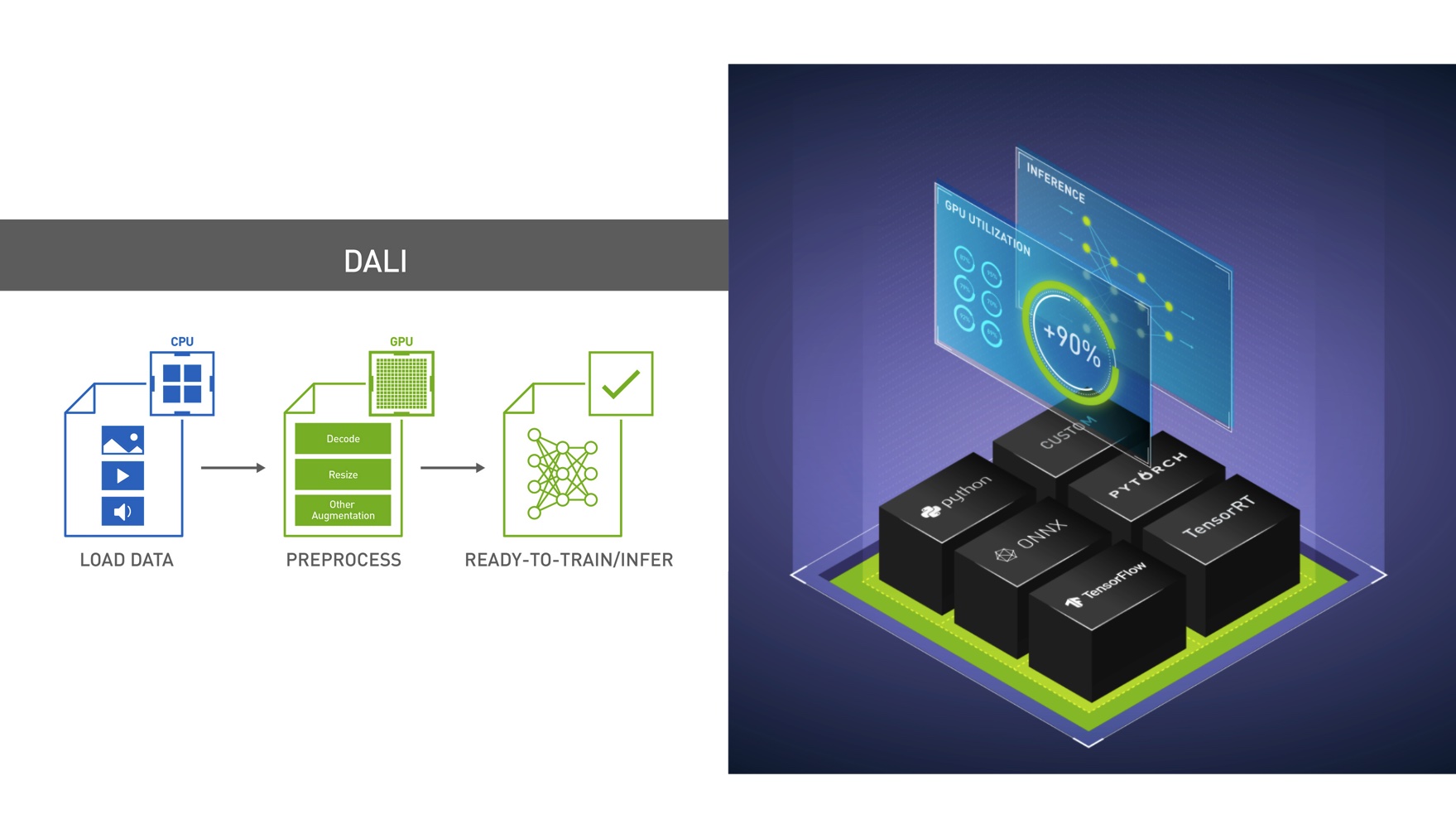

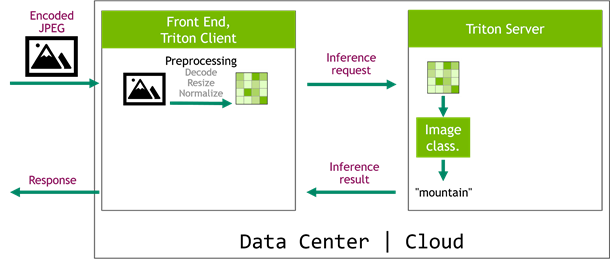

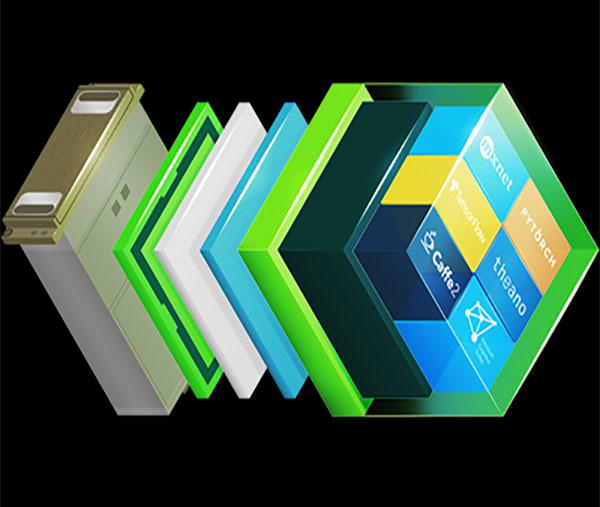

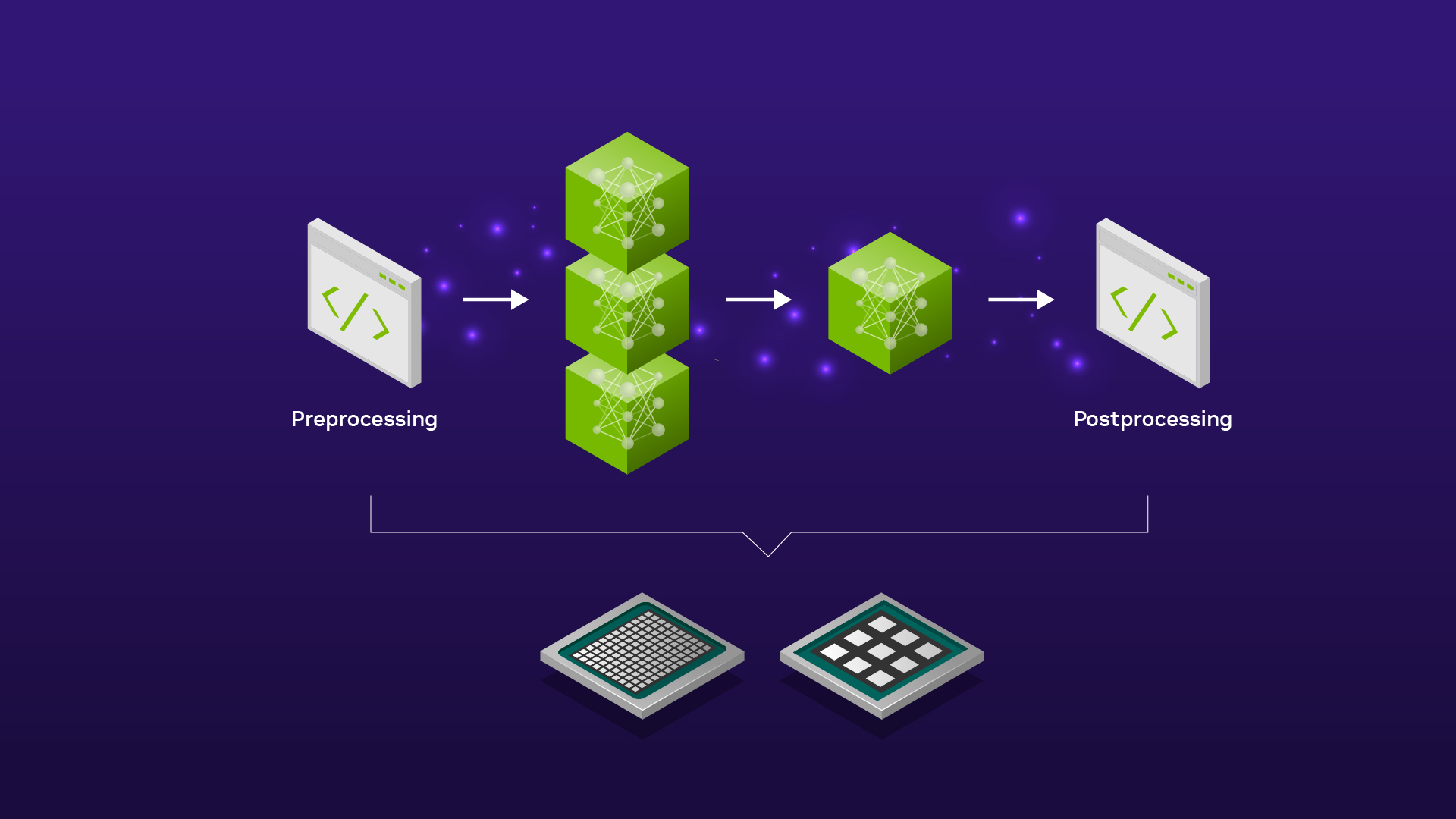

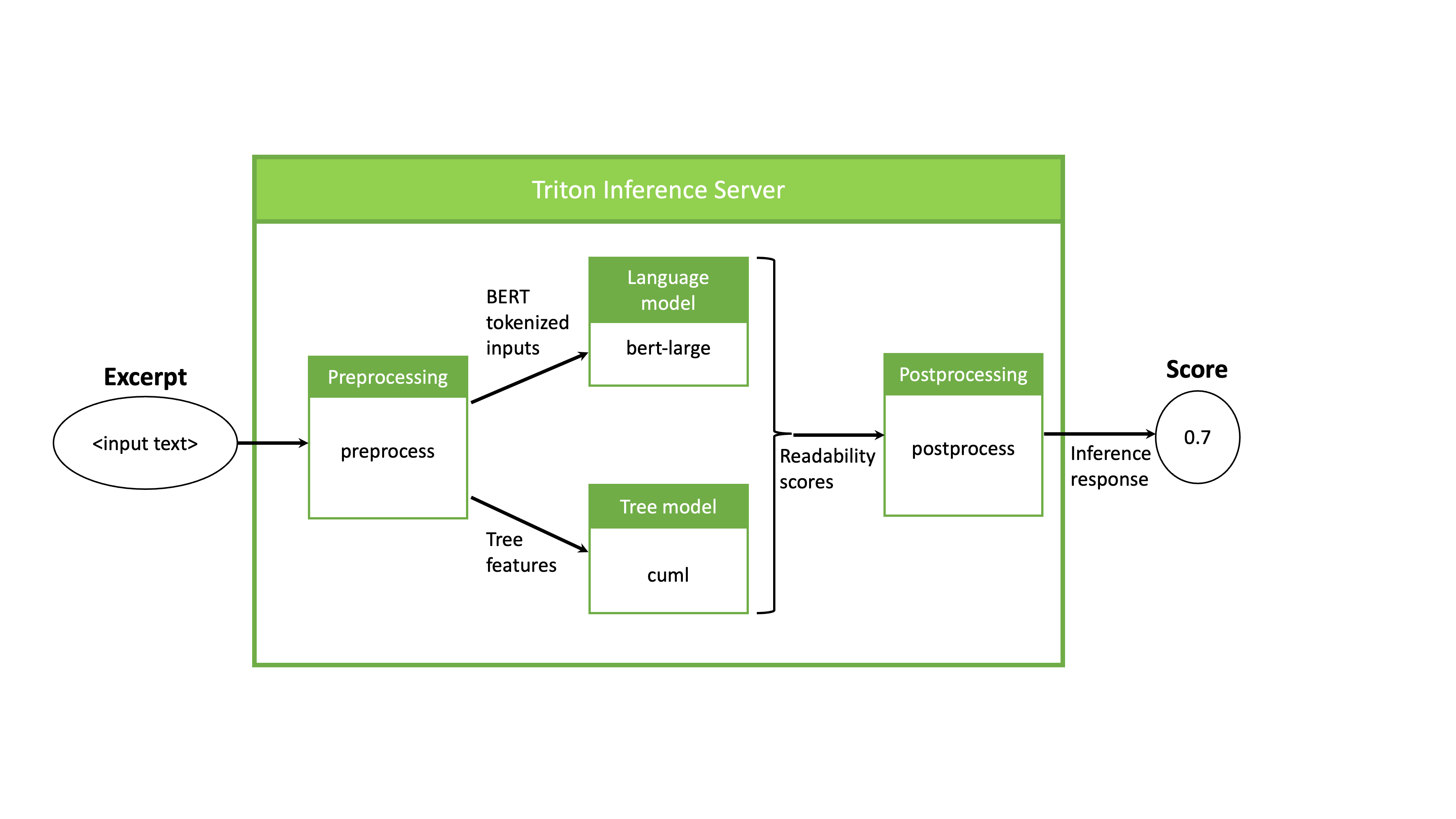

Serving ML Model Pipelines on NVIDIA Triton Inference Server with Ensemble Models | NVIDIA Technical Blog

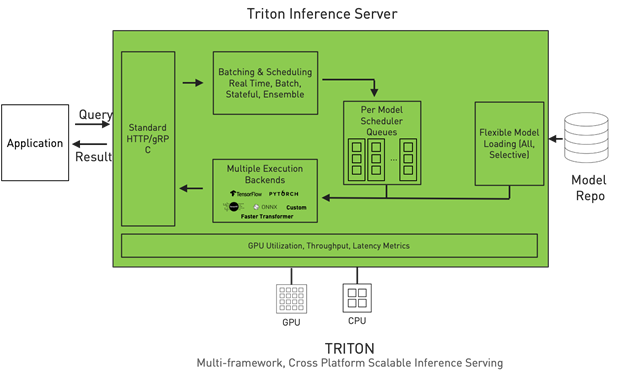

Serving Inference for LLMs: A Case Study with NVIDIA Triton Inference Server and Eleuther AI — CoreWeave

Serving ML Model Pipelines on NVIDIA Triton Inference Server with Ensemble Models | NVIDIA Technical Blog

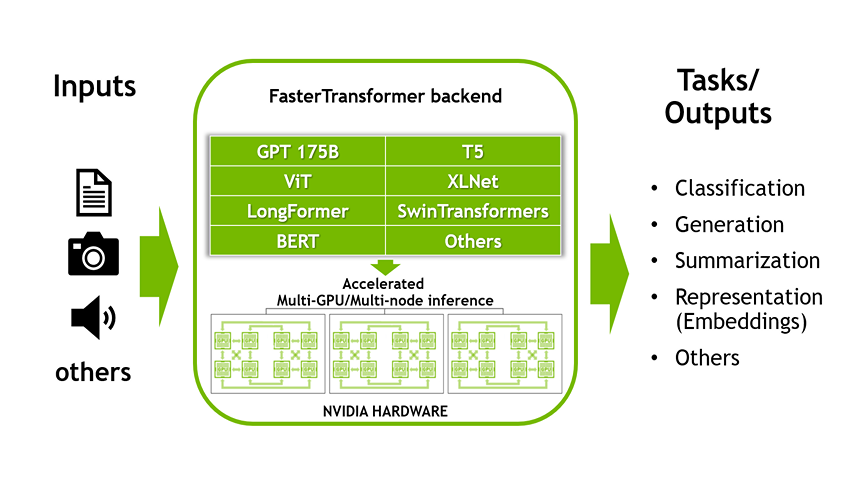

Accelerated Inference for Large Transformer Models Using NVIDIA Triton Inference Server | NVIDIA Technical Blog